Our Research

Ongoing Projects

An Auditory Lexicon in the Brain

Recognizing spoken words is vital to daily life. Identifying the relevant neural computations and the units over which they are performed is critical to furthering our understanding of how the brain performs this process. Research in both psycholinguistics and auditory neuroscience predicts hierarchical speech representations: from phonetic features to phonemes, syllables, and finally spoken words.

Studies of visual word recognition have found evidence for whole-word (lexical) and sublexical representations in the brain, but similar evidence has not been found for spoken words. Building on our work on written word representations (e.g., Glezer et al., Neuron, 2009; J Neuroscience, 2015; NeuroImage, 2016), this project leverages functional magnetic resonance imaging (fMRI) and a rapid adaptation paradigm to investigate the existence and location of a spoken word lexicon. Results can provide further evidence to adjudicate between various models of speech recognition in the brain.

Learning Speech Through Touch

Our sensory organs are exquisitely designed for bringing sensory information about our world to our brain. However, it is our brain’s ability to process this information that allows us to actually perceive the world. The goal of sensory substitution is to use one sensory system to provide information to the brain that is usually delivered via another sense. For example, braille conveys information about visual words through touch. In this project, we investigate the neural bases of learning to associate vibrotactile stimuli with spoken words. We use fMRI and representational similarity analysis (RSA) to test under what conditions trained vibrotactile stimuli engage auditory word representations in the brain. Results can provide further evidence about interactions between sensory systems and principles of multisensory learning.

Re-analyzing Open-Science Datasets for Speech Processing

This project is part of a broader effort to test hypotheses about multimodal speech perception using a large open-science dataset (MOUS, “Mother of all unification studies”; Schoffelen et al., 2019). We are exploring how advanced neuroimaging analysis methods, including multivariate pattern analysis and functional connectivity analyses, can be used to investigate novel questions beyond those envisioned in the original study design.

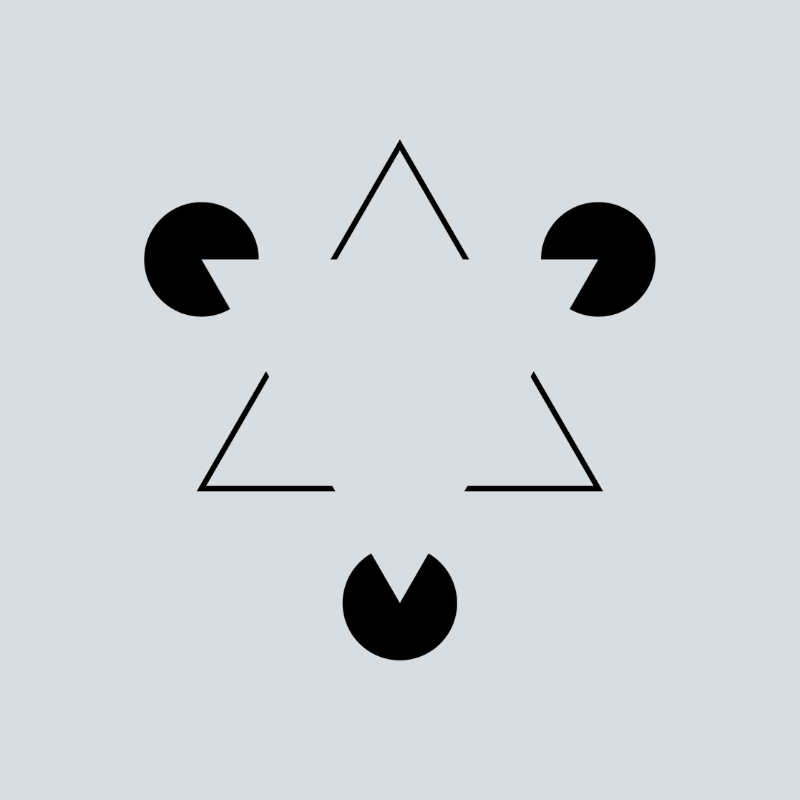

On the Specificity of Descending Intracortical Connections

Descending connections are believed to be involved in sensory, motor and intellectual processes including selective attention, object recognition, disambiguation, mental imagery, preparation for movement, visuo-motor coordination, visual surround suppression, efference copy and corollary discharge, and a variety of Gestalt phenomena.

What are the specificities of descending connections, and how do they arise? Answers will be complicated by the multiplicity of targets of descending connections onto pyramidal cells and inhibitory interneurons in superficial and deep layers of the cortex and by the multiplicity of sources of descending connections from both superficial and deep pyramidal cells in higher cortical areas. This project involves a review of the specificities of descending intracortical connections and how those specificities are acquired.

Investigating the Neural Bases of Internal Models During Speech Production

Language is a uniquely sophisticated ability of the human brain that is critical for communication with the outside world. Spoken language processing can be broken down into two major components: speech perception and speech production. The two components have traditionally been studied separately, although they are clearly intimately intertwined. Speech perception deals with the process of extracting meaning from heard speech (“sound-to-meaning”), whereas speech production orchestrates the activity of large sets of muscles to generate meaningful words and phrases (“meaning-to-articulation”). The neural bases of these processes, in particular the brain’s ability to create complex sound sequences for speech production, are still poorly understood. Recent major models share an overall “two-stream” architecture, with one processing pathway mediating speech perception and another participating in speech production. However, these models still disagree substantially about which brain regions are involved and how the two systems (perception and production) interact, and these disagreements are barriers to developing improved treatments for disorders involving speech perception and production deficits. We use multivariate imaging techniques, specifically representational similarity analysis (RSA) applied to functional magnetic resonance imaging (fMRI-RSA) and to electroencephalography (EEG-RSA), to test novel, model-based hypotheses regarding the spatial location and temporal dynamics of neural representations for speech production and speech perception. If you're interested in learning more, shoot Plamen an email at pn243 at Georgetown dot edu!

Next-Generation Machine Learning

There is a growing realization that current deep learning algorithms, their remarkable successes notwithstanding, suffer from fundamental flaws. For instance, backpropagation-based deep learning, in contrast to human learning, requires large amounts of labeled data and is ill-suited for incremental learning. In addition, as Francis Crick pointed out more than 30 years ago, backpropagation is implausible as a model of learning in the brain. Together with our collaborators at Lawrence Livermore National Laboratory, we are analyzing EEG data obtained while human participants learn novel tasks to understand how supervised learning is accomplished in the brain's deep processing hierarchies.
